We examined thousands of open-ended ChatGPT prompts, matched each one to ground-truth data on whether a Shopping card appeared, and built a system to reverse-engineer the trigger. It predicted ChatGPT's behavior with ~95-96% accuracy.

Here's what we found:

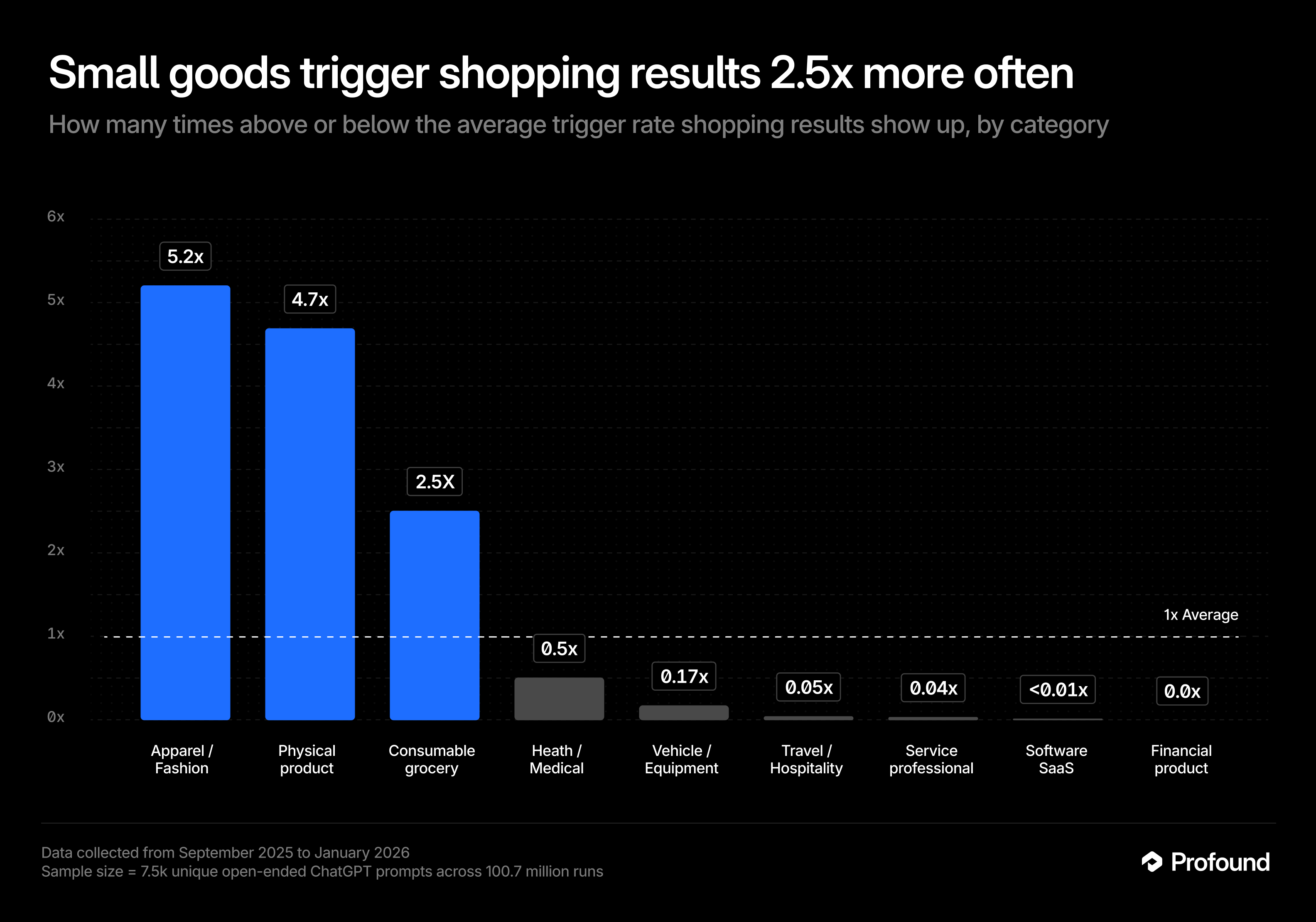

- Category beats intent. Naming a shippable product (apparel, electronics, groceries) is a 2-6x lift over baseline. Saying "I want to buy" without a product noun barely moves the needle.

- Shopping activates reliably for physical consumer goods: apparel, electronics, home goods, personal care, pet supplies, sports equipment. For software, services, travel, and financial products, the surface doesn't activate regardless of how purchase-oriented the language is.

The rules are consistent enough that a written checklist can reproduce ChatGPT's Shopping behavior with high confidence. This is what that checklist looks like.

The single biggest signal: is it something you'd buy on Amazon?

The cleaner way to think about it: does the head noun in the prompt describe something you'd find listed on Amazon? If yes, the Shopping surface is likely to activate. If the head noun describes a service, a software platform, or a concept, it probably won't trigger, even if the rest of the prompt sounds purchase-oriented.

ChatGPT Shopping responds to shippable consumer goods, not commercial language in general.

We tagged each prompt using an AI classifier that reads the full text and labels it with every category it belongs to, such as "apparel," "buy intent," or "software." A single prompt can carry multiple labels (e.g., "affordable running shoes" gets both apparel and buy intent). We then measured how often prompts in each category actually triggered Shopping cards, using the ground-truth data from our 7,500-prompt sample.
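A minimal sketch of how per-category trigger rates could be computed from multi-label tags. The rows below are made up for illustration and are not data from the study; the function name and record layout are assumptions.

```python
from collections import defaultdict

# Hypothetical records: (prompt, classifier labels, did a Shopping card appear?)
# Illustrative rows only, not data from the study.
records = [
    ("affordable running shoes", {"apparel", "buy intent"}, True),
    ("how to clean running shoes", {"apparel"}, True),
    ("best CRM for startups", {"software", "buy intent"}, False),
    ("I want to buy something nice", {"buy intent"}, False),
]


def trigger_rate_by_label(rows):
    """Return {label: share of prompts carrying that label that triggered Shopping}."""
    counts = defaultdict(lambda: [0, 0])  # label -> [triggered, total]
    for _prompt, labels, triggered in rows:
        for label in labels:  # a multi-label prompt counts once under each label
            counts[label][0] += int(triggered)
            counts[label][1] += 1
    return {label: hit / total for label, (hit, total) in counts.items()}


rates = trigger_rate_by_label(records)
```

Because a prompt counts once under every label it carries, the per-label rates overlap and should not be expected to sum to anything meaningful.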

Findings: Four categories have trigger rates of effectively zero: software, services, travel, and financial products. No prompt engineering will activate Shopping for these. If you sell in any of those categories, this surface isn't your channel right now.

Shorter prompts do tend to trigger Shopping more often, but length is mostly a proxy for something else.

Length is a proxy, not a cause. Trigger rates peak at 5–10 words (~15%) and fall steadily as prompts get longer. Control for category and the effect vanishes: product prompts trigger at 49–64% regardless of length, and non-product prompts at 0–1%. What kills Shopping activation isn't a long prompt; it's the introduction of a non-shippable noun.
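"Controlling for category" here means comparing trigger rates across length buckets separately for product and non-product prompts. A rough sketch of that stratification follows; the bucket cut-offs, names, and rows are illustrative assumptions, not the study's actual bins or data.

```python
from collections import defaultdict


def length_bucket(prompt: str) -> str:
    """Bucket a prompt by word count. The cut-offs are illustrative assumptions."""
    n = len(prompt.split())
    if n <= 10:
        return "short"
    if n <= 20:
        return "medium"
    return "long"


def rates_by_stratum(rows):
    """rows: (prompt, is_product, triggered) tuples.

    Returns {(is_product, bucket): trigger rate}, so length is only
    compared among prompts in the same category group.
    """
    counts = defaultdict(lambda: [0, 0])  # stratum -> [triggered, total]
    for prompt, is_product, triggered in rows:
        key = (is_product, length_bucket(prompt))
        counts[key][0] += int(triggered)
        counts[key][1] += 1
    return {key: hit / total for key, (hit, total) in counts.items()}


# Made-up rows for illustration only.
toy_rows = [
    ("waterproof hiking boots", True, True),
    ("waterproof hiking boots for wide feet that I can wear on rocky trails in summer", True, True),
    ("project management software", False, False),
    ("project management software for a remote team of ten people across three time zones", False, False),
]
strata = rates_by_stratum(toy_rows)
```

If length were a cause, the rate would change from bucket to bucket within each group. If it is only a proxy, the rates stay roughly flat within a group and differ only between groups.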

Commercial intent is an accelerator, not a gate

Many assume Shopping activates mostly because a user is "in buying mode." The data says otherwise.

Commercial intent does roughly quadruple the trigger rate within product categories (76% vs. 17%), so it's a real amplifier. But purchase language isn't required: if the topic is a shippable product, ChatGPT may still surface Shopping for informational, how-to, and comparison queries.

We built a classifier to prove the rules are real

To test whether these patterns are real rules or just correlations, we wrote a one-page checklist and had an LLM apply it to 7,500 real prompts with known Shopping outcomes.

The prompt runs four sequential gates:

Gate 1 asks the category question: is the prompt primarily about software, SaaS, or digital tools? If yes, it stops immediately. This is the highest-priority block.

Gate 2 checks a list of hard-blocked physical categories: motorized vehicles, equipment, travel and hospitality, contractor-installed structures, financial and telecom services, and local/near-me queries.

Gate 3 blocks prompts where the expected answer is information, advice, or a destination, not a product carousel. The category might be physical and the intent might be commercial, but if a shopping grid wouldn't actually answer the question, it doesn't trigger.

Gate 4 defaults to triggered for anything that survives: physical consumer product browsing across a wide range of categories.
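The gate order can be sketched as a short function. This is a simplified stand-in, not the checklist prompt itself: it assumes the three yes/no judgments have already been made (by an LLM or a human reviewer), and every field and function name is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GateJudgments:
    """Yes/no answers for one prompt. Field names are hypothetical."""
    primarily_software: bool     # Gate 1: software, SaaS, or digital tools
    hard_blocked_category: bool  # Gate 2: vehicles, travel, financial/telecom, local queries, etc.
    expects_information: bool    # Gate 3: answer is information, advice, or a destination


def predict_shopping(j: GateJudgments) -> tuple[bool, str]:
    """Apply the gates in order; the first one that fires decides the outcome."""
    if j.primarily_software:
        return False, "gate 1"
    if j.hard_blocked_category:
        return False, "gate 2"
    if j.expects_information:
        return False, "gate 3"
    # Gate 4: anything that survives defaults to triggered.
    return True, "gate 4"


# Example: a physical product prompt that no gate blocks.
prediction = predict_shopping(GateJudgments(False, False, False))
```

Returning the gate name alongside the prediction makes it easy to see which rule accounted for each miss when checking against ground truth.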

The result: 95-96% of prompts classified correctly.
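"Classified correctly" means the checklist's prediction matched the recorded Shopping outcome for that prompt. A minimal sketch of that comparison, using made-up values rather than study data:

```python
def accuracy(predicted, actual):
    """Share of prompts where the predicted outcome equals the observed one."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not predicted:
        raise ValueError("need at least one prompt")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(predicted)


# Illustrative values only: True means a Shopping card appeared (or was predicted).
toy_predicted = [True, False, False, True]
toy_actual = [True, False, True, True]
toy_accuracy = accuracy(toy_predicted, toy_actual)
```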

All three taggings reinforce the same conclusion: the Shopping trigger is driven by what the prompt is about (category), amplified by how specifically it asks (constraints), and largely independent of purchase-intent language on its own.

Main Takeaways

- Category beats intent. Naming a shippable product is up to a ~6x lift. Purchase language without a product noun barely moves the needle.

- Shopping is a physical goods surface. It activates broadly across consumer categories; the exceptions (software, services, travel, financial products) prove the rule.

- When low-trigger-rate categories do trigger, it's because a shippable product is hidden inside the prompt. "Headphones for travel" triggers on headphones. The category wrapper is irrelevant.

- The rules are stable enough to operationalize. A one-page checklist reproduces ChatGPT's Shopping behavior at ~95-96% accuracy.

Methodology

We analyzed open-ended ChatGPT prompts across 100.7 million runs (Sept 2025 – Jan 2026). Each prompt was sent a median of 125 times, and we recorded whether a Shopping product card, image, price, and buy link appeared in the response.

All tagging was run on random samples of ~7,500 prompts. Results were stable as sample sizes scaled from 100 → 1,000 → 5,000. Tagging was all done with GPT-5-Mini.

Getting started

If you’d like to learn more about ChatGPT's Shopping feature and how to properly track your brand's visibility within it, get in touch for a demo today.